营销

-

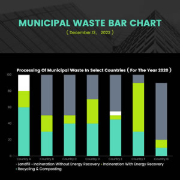

信息图

-

图表

1500 x 1500 px

-

海报

420 x 594 mm

-

传单

210 x 297 mm

-

商标

500 x 500 px

-

名片

3.5 x 2 in

-

宣传册

11 x 8.5 in

-

Facebook 广告

1200 x 628 px

-

通栏广告

728 x 90 px

-

菜单

8.5 x 11 inch
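The print sizes in this list are given in millimetres and inches, while the screen sizes are in pixels. As a rough illustration of how the two relate, the sketch below converts a few of the listed sizes to pixels. The helper name `to_pixels`, the `SIZES` table, and the 300 DPI resolution are assumptions made for this example; the list itself does not state a resolution.

```python
# Hypothetical helper (not from the source): convert a listed size to pixels.
# The default of 300 DPI is an assumed print resolution, not a stated value.
MM_PER_INCH = 25.4


def to_pixels(width, height, unit, dpi=300):
    """Return (width_px, height_px) for a size given in 'px', 'mm', or 'in'."""
    if unit == "px":
        # Pixel sizes are already in the target unit.
        return round(width), round(height)
    if unit == "mm":
        scale = dpi / MM_PER_INCH  # pixels per millimetre
    elif unit == "in":
        scale = dpi  # pixels per inch
    else:
        raise ValueError(f"unsupported unit: {unit}")
    return round(width * scale), round(height * scale)


# A few sizes copied from the marketing list.
SIZES = {
    "Poster": (420, 594, "mm"),
    "Flyer": (210, 297, "mm"),
    "Business card": (3.5, 2, "in"),
    "Brochure": (11, 8.5, "in"),
    "Logo": (500, 500, "px"),
}

if __name__ == "__main__":
    for name, (w, h, unit) in SIZES.items():
        print(name, to_pixels(w, h, unit))
```

A different target resolution can be passed through the `dpi` argument, for example `to_pixels(210, 297, "mm", dpi=150)` for a lower-resolution proof.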

Documents

Social media graphics

- YouTube channel art: 2560 x 1440 px
- Facebook cover: 851 x 315 px
- YouTube thumbnail: 1280 x 720 px
- Twitter banner: 1500 x 500 px
- Tumblr banner: 3000 x 1055 px
- Email header: 600 x 200 px
- Facebook photo: 940 x 788 px
- Instagram photo: 1080 x 1080 px
- Instagram story: 1080 x 1920 px
- Pinterest image: 735 x 1102 px